Statistical Experiments

- A statistical experiment consists of the following data:

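- A set of iid random variables $X_1, \dots, X_n$ with a common (unknown) distribution $\mathbb{P}$.

- A (parametric, identifiable) statistical model $(E, \{\mathbb{P}_\theta\}_{\theta \in \Theta})$ for $\mathbb{P}$ which is well-specified (i.e., there exists $\theta^* \in \Theta$ such that $\mathbb{P} = \mathbb{P}_{\theta^*}$).

- A partition of $\Theta$ into disjoint sets $\Theta_0$ and $\Theta_1$, which represent the null and alternative hypotheses, respectively.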

- Note that the true parameter $\theta^*$ is fixed (i.e., non-random). The purpose of a statistical experiment is not to determine its location, but rather to decide whether to reject the assertion that it lies in $\Theta_0$.

- Given an observation , we often formulate the null and alternative hypotheses as a function of , i.e., we write TODO: Think about this first.

Tests and Errors

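- A hypothesis test is a function

  $$\psi : E^n \to \{0, 1\},$$

  where $E$ is the space in which each observation takes values, $\psi = 1$ means that we reject the null hypothesis, and $\psi = 0$ means that we fail to reject it.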

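- The Type I Error associated to $\psi$ is the function

  $$\alpha_\psi : \Theta_0 \to [0, 1], \qquad \theta \mapsto \mathbb{P}_\theta(\psi = 1).$$

  This represents the probability of rejecting the null hypothesis, given that it is true. Note that in most examples, $\Theta_0$ is a singleton (in these cases, $\alpha_\psi$ is a single number), but this is not a guarantee.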

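- The Type II Error associated to $\psi$ is the function

  $$\beta_\psi : \Theta_1 \to [0, 1], \qquad \theta \mapsto \mathbb{P}_\theta(\psi = 0).$$

  This likewise represents the probability of failing to reject the null hypothesis, given that it is false.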

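- The Power of a statistical test is not simply 1 minus the type II error, since the type II error is a function on $\Theta_1$ rather than a single number. It is 1 minus the supremum (not the infimum) of the type II error, i.e., the worst case over the alternative:

  $$\pi_\psi = \inf_{\theta \in \Theta_1} \bigl(1 - \beta_\psi(\theta)\bigr) = 1 - \sup_{\theta \in \Theta_1} \beta_\psi(\theta).$$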
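To make the three definitions above concrete, here is a minimal sketch that computes them exactly for a small Bernoulli experiment. Everything specific in it is an assumption made for illustration and does not come from the lectures: the model is Bernoulli with the null hypothesis taken to be the single point $p = 1/2$, the test rejects when the number of successes is at least `k` away from `n / 2`, and the values `n = 20`, `k = 5`, the grid of alternatives, and the helper name `reject_prob` are hypothetical.

```python
from math import comb


def reject_prob(n, k, p):
    """P_p(psi = 1) for the test psi = 1{|S - n/2| >= k}, with S ~ Binomial(n, p)."""
    return sum(
        comb(n, s) * p**s * (1 - p) ** (n - s)
        for s in range(n + 1)
        if abs(s - n / 2) >= k
    )


n, k = 20, 5  # hypothetical sample size and rejection threshold

# Type I error: the null hypothesis is the single point p = 1/2,
# so alpha_psi is a single number.
type_one = reject_prob(n, k, 0.5)

# Type II error beta_psi(p) = P_p(psi = 0), evaluated on a finite grid
# of alternatives (the full alternative is every p other than 1/2).
alternatives = [0.1, 0.3, 0.45, 0.55, 0.7, 0.9]
type_two = {p: 1 - reject_prob(n, k, p) for p in alternatives}

# Power restricted to this grid: 1 minus the largest type II error.
power_on_grid = 1 - max(type_two.values())

print(f"type I error: {type_one:.4f}")
for p, beta in type_two.items():
    print(f"type II error at p = {p}: {beta:.4f}")
print(f"power on grid: {power_on_grid:.4f}")
```

The type II error is largest at the alternatives closest to $1/2$, which is why the power is defined through a worst case. On the full alternative (rather than this finite grid) the worst case is approached as $p \to 1/2$, so the power computed here is only an upper bound on the true power of this test.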

The Level of a Test

- An important point about hypothesis testing: while we always want to strike a balance between type I and type II errors, we will usually specify a Level for our test, which represents the amount of type I error we are willing to tolerate. In this sense, we will generally favor minimizing type II error, given a certain level. The level of a test is denoted $\alpha$: a test $\psi$ has level $\alpha$ if

  $$\alpha_\psi(\theta) \le \alpha \quad \text{for all } \theta \in \Theta_0.$$

  We will often say that a test rejects at level $\alpha$.

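- Often, we will not understand the distribution of our test statistic directly, but rather only understand its asymptotic distribution (i.e., as the sample size $n$ tends to infinity). In this case, we will specify an Asymptotic Level of a statistical test, also denoted by $\alpha$: writing $\psi_n$ for the test based on $n$ observations, the test has asymptotic level $\alpha$ if

  $$\lim_{n \to \infty} \alpha_{\psi_n}(\theta) \le \alpha \quad \text{for all } \theta \in \Theta_0.$$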
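The idea of fixing a level first and then minimizing type II error can be sketched for a case where the exact distribution is known. The setup below is an assumption for illustration only: a Bernoulli model with null hypothesis $p = 1/2$, a family of tests that reject when the number of successes is at least `k` away from `n / 2`, and hypothetical values `n = 20` and `alpha = 0.05`. The helper names `type_one_error` and `smallest_threshold` are likewise made up for this sketch. Within this family, a smaller `k` means a larger rejection region and hence a smaller type II error, so we look for the smallest `k` whose type I error still does not exceed the level.

```python
from math import comb


def type_one_error(n, k):
    """Exact P_{1/2}(|S - n/2| >= k) for S ~ Binomial(n, 1/2)."""
    favorable = sum(comb(n, s) for s in range(n + 1) if abs(s - n / 2) >= k)
    return favorable / 2**n


def smallest_threshold(n, alpha):
    """Smallest integer k such that the test 1{|S - n/2| >= k} has level alpha."""
    k = 0
    # The type I error decreases as k grows and reaches 0 once k > n/2,
    # so this loop always terminates.
    while type_one_error(n, k) > alpha:
        k += 1
    return k


n, alpha = 20, 0.05  # hypothetical sample size and level
k = smallest_threshold(n, alpha)
print(f"threshold k = {k}, type I error = {type_one_error(n, k):.4f}")
```

Because the binomial distribution is discrete, the achieved type I error is generally strictly below the requested level rather than equal to it.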

The $p$-value of a Test

-

In general, a test has the form

where is called the test statistic, which in our current examples, will take the form

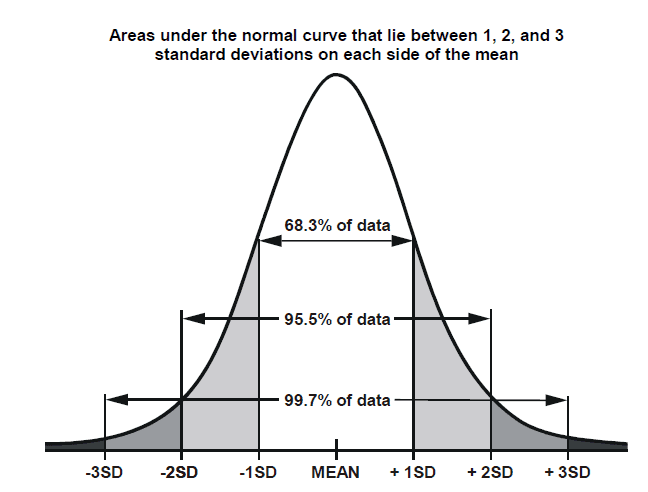

where is an estimator for , and which converges in distribution to something familiar (so far in our examples, this is usually ). What this means is that by choosing , we can decide the asymptotic level . Thus, most of the work will be finding the test statistic .

- In most examples, the null hypothesis is a singleton (for two-sided tests) or a half-interval (for one-sided tests). However, this does not generalize well to more abstract null hypotheses.

- The Asymptotic $p$-value of a test is the smallest asymptotic level $\alpha$ at which $\psi$ rejects the null hypothesis, given an observation. Equivalently, this is the probability (under the nearest point of the null hypothesis) of an event at least as extreme as the observation.

- I should note that I have added some of my own interpretation here. It's not clear from the lectures that the $p$-value makes no sense without an a priori observation, but this is the only way I can make sense of the definition, whereas everything else makes sense as a function of the observation or parameter space.

- In most straightforward statistical tests, we don't usually use asymptotic levels or $p$-values. Instead, the exact distribution of $T_n$ will be known, e.g., from a t-distribution or $\chi^2$-distribution.
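The earlier remark that choosing the threshold fixes the asymptotic level can be checked by simulation. The following is a rough sketch under stated assumptions, none of which come from the lectures: a one-sided test for a Bernoulli parameter with null value `p0 = 0.5`, a standardized statistic $T_n = \sqrt{n}\,(\bar{X}_n - p_0)/\sqrt{p_0(1 - p_0)}$ that is approximately $\mathcal{N}(0, 1)$ under the null, and hypothetical values for `n`, `reps`, and the random seed. The threshold is taken to be the $(1 - \alpha)$-quantile of the standard normal distribution.

```python
import random
from math import sqrt
from statistics import NormalDist


def rejects(sample, p0, c):
    """Evaluate psi = 1{T_n > c} with T_n = sqrt(n) * (mean - p0) / sqrt(p0 * (1 - p0))."""
    n = len(sample)
    t_n = sqrt(n) * (sum(sample) / n - p0) / sqrt(p0 * (1 - p0))
    return t_n > c


alpha = 0.05
c = NormalDist().inv_cdf(1 - alpha)  # (1 - alpha)-quantile of N(0, 1)

rng = random.Random(0)  # fixed seed so the run is reproducible
n, reps, p0 = 400, 2000, 0.5  # hypothetical simulation settings

# Draw `reps` samples under the null hypothesis and count rejections.
rejections = sum(
    rejects([rng.random() < p0 for _ in range(n)], p0, c)
    for _ in range(reps)
)
empirical_level = rejections / reps

print(f"threshold c = {c:.4f}")
print(f"empirical type I error = {empirical_level:.4f} (target {alpha})")
```

The empirical rejection rate should land close to `alpha`, up to Monte Carlo noise and the discreteness of the binomial distribution. The match is only approximate for any finite `n`, which is exactly the sense in which the level is asymptotic.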

An example

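Recall the kiss example: We observe $n$ people kissing and record whether they turn their heads to the right or left. We model these observations as iid Bernoulli random variables $X_1, \dots, X_n$ with parameter $p$, with null hypothesis $H_0 : p = 1/2$ and alternative hypothesis $H_1 : p \neq 1/2$. Let

$$T_n = \sqrt{n}\,\frac{|\bar{X}_n - 1/2|}{\sqrt{(1/2)(1 - 1/2)}},$$

where $\bar{X}_n$ is the sample mean, and let

$$\psi_\alpha = \mathbb{1}\{T_n > q_{\alpha/2}\},$$

where $q_{\alpha/2}$ is the $(1 - \alpha/2)$-quantile of $\mathcal{N}(0, 1)$.

Then by the Central Limit Theorem (assuming the null hypothesis), $\psi_\alpha$ has asymptotic level $\alpha$:

$$\lim_{n \to \infty} \mathbb{P}_{1/2}(\psi_\alpha = 1) = \mathbb{P}(|Z| > q_{\alpha/2}) = \alpha, \qquad Z \sim \mathcal{N}(0, 1).$$

Suppose we are given observed values of $n$ and $\bar{X}_n$. Then $T_n$ takes an observed value $t$. Let $\alpha$ be the value such that $q_{\alpha/2} = t$, so that $\psi_\alpha$ has asymptotic level $\alpha$. Then $\alpha$ is the $p$-value of this test. Since $T_n \to |Z|$ in distribution under the null hypothesis, we can compute the $p$-value as $\mathbb{P}(|Z| > t) = 2\,(1 - \Phi(t))$, where $\Phi$ is the standard normal CDF.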
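The $p$-value computation in this example can be sketched numerically. The code assumes a two-sided test of $p = 1/2$ for Bernoulli data, with the statistic $T_n = \sqrt{n}\,|\bar{X}_n - p_0| / \sqrt{p_0(1 - p_0)}$ compared against standard normal quantiles. The data used (60 heads turned right out of 100 observations) are hypothetical and are not the figures from the lectures, and the function name `asymptotic_p_value` is made up for this sketch.

```python
from math import sqrt
from statistics import NormalDist


def asymptotic_p_value(n, x_bar, p0=0.5):
    """Observed statistic and two-sided asymptotic p-value for H0: p = p0."""
    t_obs = sqrt(n) * abs(x_bar - p0) / sqrt(p0 * (1 - p0))
    # P(|Z| > t_obs) for Z ~ N(0, 1)
    p_value = 2 * (1 - NormalDist().cdf(t_obs))
    return t_obs, p_value


# Hypothetical data, not taken from the notes: sample mean 0.6 from n = 100.
t_obs, p_value = asymptotic_p_value(n=100, x_bar=0.6)

# The p-value is the level alpha whose threshold q_{alpha/2} equals the
# observed statistic, so inverting the quantile recovers t_obs.
threshold_at_p_value = NormalDist().inv_cdf(1 - p_value / 2)

print(f"observed T_n = {t_obs:.4f}")
print(f"asymptotic p-value = {p_value:.4f}")
print(f"q_(alpha/2) at alpha = p-value: {threshold_at_p_value:.4f}")
```

The last line illustrates the definition directly: at any level above the $p$-value the threshold drops below the observed statistic and the test rejects, while at any level below it the test fails to reject.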