These are notes I took while trying to understand how to A/B test an intervention on churn rate.

Setup

The Scenario

Suppose we are a subscription service, and we want to A/B test a new campaign to increase usage among low-activity viewers. Suppose also that we do not have good information on the expected return rate from month to month (e.g., because we have not previously collected data to establish a baseline population return rate). In this scenario, we need separate test and control groups, and we test the difference between the two groups' return rates after the intervention. To make things concrete, let's say we measure the return rate at the end of every month, and the intervention is a push notification or something similar that fires sometime during the month of study. Moreover, the devices in the study have been chosen because they are at a relatively low level of activity.

Notation

- Let the subscript $t$ or $c$ denote that a quantity is calculated for the test or control group, respectively.

- Let $p$ denote return rate.

- Let .

- Let $X_i$ denote the observed number of returners in group $i$.

- Let $n$ be the sample size for each of the test and control groups (i.e., there are $2n$ total participants).

- Let $\alpha$ and $\beta$ be the usual type I and type II error probabilities, respectively.

- For any real number $\gamma \in (0, 1)$, let $z_\gamma$ be the usual standard normal cutoff quantile, i.e., the point with upper-tail probability $\gamma$.

Objective

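Note that $X_i \sim \mathrm{Binomial}(n, p_i)$. Assuming $n$ is large, then $\hat{p}_i = X_i / n$ is approximately distributed as $N\left(p_i, \; p_i(1 - p_i)/n\right)$. Since we are dealing with a lot of customers and there is little risk in using large samples, we may assume $n$ is large and the differences between the binomial and normal distributions are negligible. Therefore, we can use a suitable statistic to test the difference $p_t - p_c$, estimated by $\hat{p}_t - \hat{p}_c$, which is approximately distributed as $N\left(p_t - p_c, \; [p_t(1 - p_t) + p_c(1 - p_c)]/n\right)$ when the two groups are independent.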
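The normal approximation can be sanity-checked by simulation. The following is a minimal sketch and not part of the original notes: the function names, the example rates, and the sample size are made up for illustration, and it assumes the two groups are independent and each has size `n`.

```python
import random
import statistics
from math import sqrt


def simulate_differences(p_t, p_c, n, trials=2000, seed=0):
    """Simulate `trials` experiments and return the observed differences
    in return rate (test minus control) for each one."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(trials):
        # Each participant returns independently with the group's return rate.
        x_t = sum(rng.random() < p_t for _ in range(n))
        x_c = sum(rng.random() < p_c for _ in range(n))
        diffs.append(x_t / n - x_c / n)
    return diffs


def normal_approximation(p_t, p_c, n):
    """Mean and standard deviation of the approximating normal distribution
    for the difference in observed return rates."""
    mean = p_t - p_c
    sd = sqrt((p_t * (1 - p_t) + p_c * (1 - p_c)) / n)
    return mean, sd
```

Comparing `statistics.mean(diffs)` and `statistics.stdev(diffs)` from `simulate_differences(0.35, 0.30, 500)` against `normal_approximation(0.35, 0.30, 500)` gives a rough sense of how close the approximation is at a given sample size.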

Since we hope to increase return (that is, reduce churn) with this campaign, let's assume the null hypothesis is that $p_t \le p_c$, and the alternative hypothesis is $p_t > p_c$.

Calculating the $Z$-Statistic

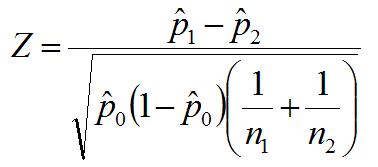

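Let

$$Z = \frac{\hat{p}_t - \hat{p}_c}{\sqrt{\left[\hat{p}_t(1 - \hat{p}_t) + \hat{p}_c(1 - \hat{p}_c)\right]/n}}, \qquad \hat{p}_i = \frac{X_i}{n}.$$

Assuming the null hypothesis, we reject if $Z > z_\alpha$. Under the null hypothesis, $p_t \le p_c$. In fact, we can assume $p_t = p_c$ under the null, so that our rejection region is as small as possible. In other words, define

$$\hat{p} = \frac{X_t + X_c}{2n}, \qquad Z_0 = \frac{\hat{p}_t - \hat{p}_c}{\sqrt{2\hat{p}(1 - \hat{p})/n}},$$

and define the rejection region as

$$\{Z_0 > z_\alpha\}.$$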
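As a minimal sketch of how such a test could be computed, the function below assumes a one-sided test with a pooled variance estimate (both groups of size `n`, equal return rates under the null) and an upper-tail cutoff. The function name and the example counts are hypothetical; only the Python standard library is used.

```python
from math import sqrt
from statistics import NormalDist


def pooled_z_test(x_t, x_c, n, alpha=0.05):
    """One-sided two-proportion z-test with equal group sizes.

    x_t, x_c: observed returners in the test and control groups.
    n: participants per group.
    Returns (z, cutoff, reject).
    """
    p_hat_t = x_t / n
    p_hat_c = x_c / n
    # Pooled estimate of the common return rate, assuming p_t = p_c.
    p_pool = (x_t + x_c) / (2 * n)
    se = sqrt(2 * p_pool * (1 - p_pool) / n)
    if se == 0:
        raise ValueError("pooled rate is 0 or 1; the statistic is undefined")
    z = (p_hat_t - p_hat_c) / se
    # Upper-tail cutoff: P(N(0, 1) > cutoff) = alpha.
    cutoff = NormalDist().inv_cdf(1 - alpha)
    return z, cutoff, z > cutoff
```

For example, `pooled_z_test(350, 300, 1000)` compares an observed 35% return rate in the test group against 30% in the control group; the third element of the result says whether the null is rejected at the 5% level.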

Deciding Sample Size

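Power is defined to be

$$1 - \beta = P(\text{reject } H_0 \mid H_1) = P\left(\frac{\hat{p}_t - \hat{p}_c - \delta}{\sigma_1} > \frac{z_\alpha \sigma_0 - \delta}{\sigma_1}\right),$$

where $\hat{p}_i = X_i/n$, $\delta = p_t - p_c > 0$ is the true difference under the alternative, $\sigma_0 = \sqrt{2\bar{p}(1 - \bar{p})/n}$ with $\bar{p} = (p_t + p_c)/2$ is the standard deviation used in the test, and $\sigma_1 = \sqrt{[p_t(1 - p_t) + p_c(1 - p_c)]/n}$ is the standard deviation of $\hat{p}_t - \hat{p}_c$ under the alternative.

But this expression is true if and only if the right-hand side of the inequality is $-z_\beta$. Therefore, we have

$$n = \left(\frac{z_\alpha\sqrt{2\bar{p}(1 - \bar{p})} + z_\beta\sqrt{p_t(1 - p_t) + p_c(1 - p_c)}}{p_t - p_c}\right)^2.$$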
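A minimal sketch of a per-group sample size calculation, assuming the standard one-sided two-proportion formula with equal group sizes: the pooled variance $2\bar{p}(1-\bar{p})$ with $\bar{p} = (p_t + p_c)/2$ under the null, and the unpooled variance $p_t(1-p_t) + p_c(1-p_c)$ under the alternative. The function name, the default error rates, and the example return rates are hypothetical, and the guessed rates have to come from the experimenter since no baseline is available.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_group(p_c, p_t, alpha=0.05, beta=0.20):
    """Approximate participants needed in EACH group to detect an increase
    in return rate from p_c to p_t with type I error alpha and power 1 - beta."""
    if not (0 < p_c < p_t < 1):
        raise ValueError("expected 0 < p_c < p_t < 1 for a one-sided test")
    std_normal = NormalDist()
    # Upper-tail cutoffs: P(N(0, 1) > z_gamma) = gamma.
    z_alpha = std_normal.inv_cdf(1 - alpha)
    z_beta = std_normal.inv_cdf(1 - beta)
    p_bar = (p_t + p_c) / 2
    null_sd = sqrt(2 * p_bar * (1 - p_bar))            # per-observation scale under the null
    alt_sd = sqrt(p_t * (1 - p_t) + p_c * (1 - p_c))   # per-observation scale under the alternative
    n = ((z_alpha * null_sd + z_beta * alt_sd) / (p_t - p_c)) ** 2
    return ceil(n)
```

Calling `sample_size_per_group(0.30, 0.35)` gives the per-group size for detecting a five-point lift from a guessed 30% return rate; halving the lift roughly quadruples the requirement, since the difference enters the formula squared in the denominator.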